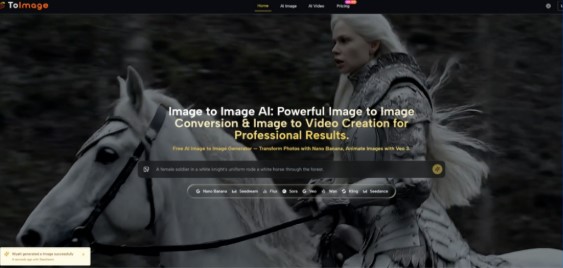

Unlocking the Potential of Image to Image Creation

In today’s fast-paced digital environment, visual creators and marketing professionals face a recurring challenge: producing high-quality, engaging content consistently while working within strict budgets and tight deadlines. Traditional visual asset creation often involves expensive photo shoots, long post-production cycles, and heavy reliance on specialized technical staff, all of which can slow creative output. Image-to-image technology offers a way around these bottlenecks. It uses deep learning models to transform an existing image rather than starting from nothing, which lets professionals sidestep many conventional production barriers and keep delivering compelling visual narratives.

Moving from a blank canvas to a finished asset is traditionally the most resource-intensive phase of any project. Adapting an existing visual into a new aesthetic direction removes much of that cost. Creators keep their core conceptual assets while changing the artistic style, structural composition, or environmental context. Because the workload shifts from manual pixel manipulation to creative direction, teams can spend their energy on stronger underlying concepts rather than on the mechanics of execution.

Assessing Specialized Models for Visual Asset Transformation

In my testing of various generation engines, different model architectures showed distinct strengths when processing complex transformations, so choosing the right engine is critical for matching the final output to a project’s requirements. For commercial work that demands very high visual fidelity, some models were noticeably more stable at rendering intricate material textures, convincing light dispersion, and realistic spatial perspective. In my tests, these specialized engines produced visuals that held up against professional photography standards.

Hyper Realistic Output and Context Aware Editing Precision

Beyond broad stylistic changes, the ability to execute surgical modifications on existing visuals represents a significant leap in utility. Context-aware editing architectures demonstrate remarkable competence when handling localized adjustments. Instead of relying on destructive editing techniques or cumbersome manual masking, these systems can precisely replace designated objects or introduce new typographic elements without disrupting the cohesive visual structure of the surrounding environment. This level of precision is particularly valuable when adapting brand assets for diverse regional campaigns.

Maintaining Character Consistency Across Complex Visual Sequences

One of the most persistent hurdles in serial visual production is keeping a character’s core traits consistent across different scenes and stylistic interpretations. With multi-reference input, creators can anchor a subject’s facial geometry and physical attributes to several source images. This anchoring helps the central subject retain a recognizable, consistent identity throughout the sequence, even when the environmental context or artistic rendering shifts dramatically.

Establishing a Standardized Workflow for Visual Generation

To maximize the reliability of the generation output and minimize unpredictable variations, adhering to a structured operational methodology is essential. Based on the official functional sequence, the process of transforming visual assets follows these specific steps:

- Upload reference visuals into the system workspace, utilizing multiple reference inputs if character or style consistency is required.

- Configure the appropriate computational engine based on the specific artistic requirements, desired output resolution, or animation needs.

- Generate the outputs and utilize side-by-side comparison tools to evaluate the results, selecting the variation that best fulfills the creative objective.
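The checks implied by these steps can be written down before anything is submitted. The following is a minimal sketch only: `TransformJob`, `validate`, and every field name are hypothetical illustrations and do not correspond to any particular tool's API.

```python
from dataclasses import dataclass


@dataclass
class TransformJob:
    """Hypothetical description of one image-to-image request."""
    reference_images: list[str]  # step 1: uploaded reference visuals
    engine: str                  # step 2: the chosen processing engine
    prompt: str                  # text describing the desired change
    variations: int = 4          # step 3: candidates to compare side by side


def validate(job: TransformJob, need_consistency: bool = False) -> list[str]:
    """Return a list of problems; an empty list means the job looks ready."""
    problems = []
    if not job.reference_images:
        problems.append("at least one reference image is required")
    elif need_consistency and len(job.reference_images) < 2:
        problems.append("character or style consistency needs multiple reference images")
    if not job.prompt.strip():
        problems.append("prompt is empty")
    if job.variations < 2:
        problems.append("request at least two variations so results can be compared")
    return problems


job = TransformJob(
    reference_images=["hero.png"],
    engine="Flux Kontext",
    prompt="replace the sign text",
)
print(validate(job, need_consistency=True))
```

Running the checks first means a missing reference image or an empty prompt is caught before a generation cycle is spent on it.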

Evaluating the Objective Capabilities of Transformation Engines

Understanding the precise technical boundaries of each available tool allows creators to make highly informed strategic decisions during the production process. The following objective comparison outlines the primary characteristics of the leading processing architectures currently available:

| Processing Engine | Core Technical Focus | Best Application Scenario |
| --- | --- | --- |
| Nano Banana | Realistic Texture and Light | Commercial Photography |
| Seedream | Rapid Output Generation | High Volume Social Content |
| Flux Kontext | Context Aware Precision | Complex Editing Tasks |
| Veo Three | Native Audio Animation | Immersive Visual Narratives |
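For teams that script their production pipeline, the comparison can be kept as a small lookup so the engine choice is made in one place. This is an illustrative sketch: the engine names and scenarios come from the comparison above, while `ENGINE_BY_SCENARIO` and `pick_engine` are hypothetical helper names.

```python
# Scenario-to-engine mapping taken from the comparison; keys are lowercased.
ENGINE_BY_SCENARIO = {
    "commercial photography": "Nano Banana",
    "high volume social content": "Seedream",
    "complex editing tasks": "Flux Kontext",
    "immersive visual narratives": "Veo Three",
}


def pick_engine(scenario: str) -> str:
    """Return the engine listed for a scenario, ignoring case and outer spaces."""
    key = scenario.strip().lower()
    if key not in ENGINE_BY_SCENARIO:
        known = ", ".join(sorted(ENGINE_BY_SCENARIO))
        raise ValueError(f"unknown scenario {scenario!r}; expected one of: {known}")
    return ENGINE_BY_SCENARIO[key]


print(pick_engine("Complex Editing Tasks"))
```

Raising an error for an unlisted scenario, instead of falling back to a default engine, keeps the choice deliberate.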

Understanding the Inherent Limitations of Generation Technologies

While these tools have real transformative potential, a realistic view of their current limits is necessary for professional use. The quality and accuracy of the final output still depend heavily on how precise and detailed the text prompt is. In my observation, achieving a highly specific visual result often takes multiple generation cycles and iterative parameter adjustments, particularly when attempting unconventional angles or blending contrasting artistic styles. Creators should treat these technologies as sophisticated collaborative instruments that augment human creativity, not as fully autonomous systems that can replace experienced artistic judgment.
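The iterative cycle described here can be budgeted explicitly. The sketch below is generic and assumes nothing about a specific tool: `generate` stands in for whatever call produces an image, `score` stands in for the reviewer's own rating of a result, and the `strength` parameter in the usage lines is a hypothetical example setting.

```python
from typing import Callable, Iterable, Optional, Tuple


def iterate_until_good(
    generate: Callable[[dict], str],
    score: Callable[[str], float],
    parameter_sets: Iterable[dict],
    threshold: float,
) -> Tuple[Optional[str], float, int]:
    """Try each parameter set in order and stop once a result is good enough.

    Returns (best_result, best_score, cycles_used).
    """
    best_result, best_score, cycles = None, float("-inf"), 0
    for params in parameter_sets:
        cycles += 1
        result = generate(params)
        current = score(result)
        if current > best_score:
            best_result, best_score = result, current
        if best_score >= threshold:
            break  # good enough: stop spending generation cycles
    return best_result, best_score, cycles


# Stand-ins for a real generator and a human rating, for illustration only.
ratings = {0.3: 0.4, 0.5: 0.7, 0.7: 0.9}
best, rating, used = iterate_until_good(
    generate=lambda p: f"image(strength={p['strength']})",
    score=lambda r: ratings[float(r[len("image(strength="):-1])],
    parameter_sets=[{"strength": s} for s in (0.3, 0.5, 0.7)],
    threshold=0.65,
)
print(best, rating, used)
```

The scoring function is deliberately left to the caller, since judging whether a result meets the creative objective remains a human decision.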